Note: this post isn’t much longer than a usual Bibliophilia post, but it has a lot of pictures and might get cut off in your email client. For best results, read it on Substack.

This person does not exist. Neither does this child, or this model. The images were generated by a computer program. If you’ve been following artificial intelligence for a while, you’re probably already familiar with This Person Does Not Exist, the website that has used Nvidia’s StyleGAN, a generative image model, to randomly generate photorealistic human faces for years. The thing is, the face-generating version of StyleGAN was trained on a large dataset made up entirely of photographs of faces, until it understood faces exhaustively. The portrait above was made with OpenAI’s DALL-E 2, which was never trained specifically on faces. It learned from an enormous, general-purpose collection of captioned images, and when somebody asked it for a portrait, it produced a photorealistic one matching the request within moments. In technical terms, StyleGAN is a specialized model, while DALL-E is capable of “zero-shot” generation: it can draw subjects it was never specifically trained to draw. To put it plainly, StyleGAN can only do one thing well; DALL-E can do almost anything. This means that you can ask the program to draw a realistic child, a sci-fi android, or both:

Like a lot of faces DALL-E 2 and StyleGAN put out, the likeness isn’t perfect. The figure’s eyes aren’t quite symmetrical, and neither are the reflections of light in its irises. The shading around the brows is also off. The program could do better. What’s frightening is that it did so well without any face-specific training, and did it in seconds, churning out a dozen similar variants at the same time.

Here is the cover of this month’s issue of Cosmopolitan, also drawn with DALL-E 2:

Professional illustrators are very nervous right now. So are fact-checkers, art teachers, and IP lawyers. Access to DALL-E 2 is tightly controlled by OpenAI, and the list of forbidden subjects for the program (public figures, violence, porn) is deservedly long, but it seems only a matter of time before the software, or something just as good, becomes available to the public.

Of course, AI won’t stop at making pretty pictures. After a few years of slowly transforming smaller, unsexy areas of finance, accounting, calculation, aggregation, metadata, and targeted advertising, AI is now coming after everything. Massive, highly adaptive programs will disrupt huge swathes of our economy and culture, changing how we work, create, communicate, and play.

Whether or not this will lead to the end of the world or the dawn of a techno-utopia is beyond my ken, but as somebody with a documented interest in technology’s impact on literature, I want to think through the possibilities of AI and writing. Literature has had its own DALL-E-style shocks in the last year, and there’s no reason to think the advances won’t keep happening. I will source as many claims and predictions as possible, with the caveat that like all technology forecasting, at least one idea will turn out to be eerily prescient, two more will be laughably wrong, and the rest will muddle along between the two extremes.

What is AI, anyway?

There’s no generally accepted definition of artificial intelligence, and there never has been. Unless you work in the field, you probably have the same vague, sci-fi-inflected notion of robots with feelings that I do when I hear the term. Despite what recent headlines would have you think, we probably won’t have sentient machines with minds like living creatures anytime soon, or ever.

It’s better to think of AI as plain old computer programs with a crucial difference: AI uses feedback. Its outputs can be turned back into inputs, usually based on whether or not they satisfy a pre-set goal. The classic example from cybernetics is a thermostat, which runs a continuous loop: it compares the room against a target temperature, heats or cools to adjust, then checks again. The AI that runs DALL-E 2, or YouTube’s recommendation engine, or the writing programs I’m looking at here is orders of magnitude more sophisticated, but it all comes down to a set of targets to meet, a way to monitor progress, and a correction mechanism to get closer to the target.
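To make that feedback loop concrete, here is a toy thermostat in Python. It’s a minimal sketch under made-up assumptions, not how any real thermostat or AI system is built: the function names, the half-degree tolerance, and the one-degree-per-step heating rate are all invented for illustration. What it does show is the basic shape described above: a target, a way to monitor, and a correction that feeds back into the next round.

```python
def thermostat_step(target: float, current: float, tolerance: float = 0.5) -> str:
    """Compare the room against the goal and pick a correction."""
    if current < target - tolerance:
        return "heat"
    if current > target + tolerance:
        return "cool"
    return "idle"


def simulate(target: float, start: float, steps: int = 10, rate: float = 1.0) -> list:
    """Run the feedback loop: monitor, compare, correct, then monitor again."""
    temp = start
    history = [temp]
    for _ in range(steps):
        action = thermostat_step(target, temp)  # compare the current state against the goal
        if action == "heat":
            temp += rate  # the correction becomes the next loop's input
        elif action == "cool":
            temp -= rate
        history.append(temp)
    return history


print(simulate(target=20.0, start=15.0, steps=8))
# [15.0, 16.0, 17.0, 18.0, 19.0, 20.0, 20.0, 20.0, 20.0]
```

The room climbs a degree at a time until it lands inside the target range, and from then on the loop just keeps checking. Systems like DALL-E 2 swap the single temperature for millions of adjustable numbers, but the principle of measuring the gap and nudging toward the goal is the same.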

Most AIs that write are natural language processing programs, which I’ll call NLPs here. These programs, like Google’s PaLM and OpenAI’s GPT-3, build up a storehouse of knowledge about language by reading hundreds of billions of words culled from the internet, magazines, and books. The more language they read, the better they can predict how a sentence, paragraph, or chapter will develop. Like most AI, these NLPs can’t write on their own; they need some kind of prompt to respond to. Everything they do is essentially imitative, whether they’re mimicking your texting style or the verse of Dr. Seuss. This has some weird philosophical implications, discussed below.
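The “predict what comes next” idea can be illustrated with something far cruder than GPT-3: a bigram model that simply counts which word followed which in its training text, then extends a prompt one most-likely word at a time. To be clear, this is a swapped-in toy technique, not how PaLM or GPT-3 actually work (they use huge neural networks over sub-word tokens), and the function names and tiny Seuss-flavored corpus are my own assumptions for illustration.

```python
from collections import Counter, defaultdict
from typing import Dict, Optional


def train_bigrams(text: str) -> Dict[str, Counter]:
    """Count, for every word, which words followed it in the training text."""
    words = text.lower().split()
    following: Dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def predict_next(model: Dict[str, Counter], word: str) -> Optional[str]:
    """Return the word most often seen after `word`, or None if it never appeared."""
    counts = model.get(word.lower())
    if not counts:
        return None
    # Counter.most_common breaks ties by first-seen order.
    return counts.most_common(1)[0][0]


def continue_prompt(model: Dict[str, Counter], prompt: str, length: int = 5) -> str:
    """Extend a prompt one predicted word at a time."""
    words = prompt.lower().split()
    for _ in range(length):
        nxt = predict_next(model, words[-1])
        if nxt is None:
            break  # the model has never seen anything follow this word
        words.append(nxt)
    return " ".join(words)


corpus = "i do not like green eggs and ham i do not like them sam i am"
model = train_bigrams(corpus)
print(continue_prompt(model, "I do", length=4))
# i do not like green eggs
```

Note that the toy can only ever echo word pairs it has already read, which is the imitative quality described above in its most naked form. Feed it more text and its guesses get better; that, scaled up enormously, is the intuition behind “the more language they read.”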

What can AI already do with writing?

AI has already been creeping into journalism and literature for half a decade. In certain limited contexts with highly formulaic structures, like stock reports and sports recaps, AI already does a decent job. Forbes, The Washington Post, and Bloomberg, for instance, use AI programs for simple reports on scores, stocks, and election tallies that only require the machine to plug numbers into pre-made templates. This also works well with customer service: you’ve probably swatted away several AI customer-service chatbots this week. AI programs are also getting pretty good at poetry, especially in very strict forms like haiku, though they still have trouble making sense.
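Here is roughly what “plugging numbers into pre-made templates” means, as a minimal Python sketch. The function name, the team names, and the three-point cutoff for choosing “edged” over “beat” are all invented for illustration; I don’t know how the systems at Forbes, the Post, or Bloomberg are actually built, and they are certainly more elaborate than this.

```python
def game_recap(home: str, away: str, home_score: int, away_score: int) -> str:
    """Fill a pre-written sentence template with numbers from a box score."""
    if home_score == away_score:
        return f"{home} and {away} played to a {home_score}-{away_score} tie."
    winner, loser = (home, away) if home_score > away_score else (away, home)
    high, low = max(home_score, away_score), min(home_score, away_score)
    # Pick a verb from a tiny fixed vocabulary based on the margin of victory.
    verb = "edged" if high - low <= 3 else "beat"
    return f"{winner} {verb} {loser} {high}-{low}."


print(game_recap("Springfield", "Shelbyville", 98, 96))
# Springfield edged Shelbyville 98-96.
```

Nothing here understands basketball; the program only slots numbers into sentences a human wrote in advance, which is why this approach works for scores and stock tallies but not for anything requiring judgment.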

With fiction, there’s been less progress. One AI-written novel made it past the first round of judging in a literary competition, though it didn’t get there in one giant leap: a team of researchers fed it prompts, words, and scenarios, then stitched hundreds of fragments together into a more coherent novel. They also stacked the deck in the machine’s favor somewhat by framing the story as a newly sentient computer trying to write its first novel, so any errors in tone or consistency could be passed off as a faithful rendering of the narrator’s voice. Still, it’s impressive, and it highlights the kind of writing that’s being done now with AI as a tool, rather than as an independent writer.

This kind of collaborative use is becoming more common. Chandler Klang Smith, for example, feeds parts of her novels to SudoWrite, asking it to complete a sentence or paragraph however it likes. Even if most of its suggestions are drivel, after enough attempts it can come up with a workable phrase or idea to get her back on track. “It truly does remind me,” she says, “of the—all too rare—occasions when I’ve solved a writing problem with a dream… except the AI dreams on command.” Not many novelists have admitted yet to using AI at all, but if it does catch on, it’ll probably start in this way.

But AI is also leading toward new kinds of hybrid literature written jointly by humans and software. Text-based role-playing game platforms like NovelAI and AI Dungeon act as game master and narrator, generating people, places, and events for players to respond to. I haven’t tried them myself, but presets and options reportedly let you fiddle with the writing software, nudging it to write in certain ways or toward certain goals. A quick tour of the NovelAI subreddit suggests that the system still regularly flubs its lines and forgets where it’s going, but as the NLPs powering these platforms improve, it might not be long before we have something like an epic fantasy novel that writes itself in real time, in reaction to you and your friends.

More than anybody else, the writer and engineer K Allado-McDowell has tried to stake out a claim at the frontier of AI-writer collaboration. Their first book, 2021’s Pharmako AI, is a kind of epistolary exchange between Allado-McDowell and GPT-3. Allado-McDowell’s approach was to feed GPT-3 source texts to flavor its style and references, then start chatting with it and mold the most interesting responses into dialogues. The results are bizarre, free-associative conversations about free will, biology, futurism, and other topics a programmer-artist employed by Google would be into. Pharmako AI’s version of GPT-3 is essentially an Esalen guru, only even more incomprehensible. I don’t love the result, but the process is fascinating, and it will probably become increasingly prominent in AI literature.

AI is also starting to help out on the reading side of literature. NLPs can read even better than they write, and this is beginning to make an impact in research and publishing. Ben Blatt made some waves a few years ago with Nabokov’s Favorite Word Is Mauve, which rounded up some of the most interesting literary insights yielded so far by computer-based statistical analysis of literature and language. For instance, computer analysis of early modern English texts has confirmed what scholars long suspected: Shakespeare didn’t actually “invent” thousands of words, but simply used obscure words more often than his contemporaries. This was hard to catch until more texts were scanned into our language databases and programs were instructed to cross-check them against the Bard.

Publishers have also tried using these programs to market their books better. A few years ago, a pair of researchers promised a “Bestseller Code” for making profitable novels, though this hasn’t yet launched a golden age of popular literature algorithmically calibrated to please readers. I haven’t read the book, but Jia Tolentino says that the research mostly tells us what we already know about bestsellers. I tried looking around for more sources on publishing and AI, but most of them, like this pamphlet, seem to revolve around this kind of metadata-gathering. This may turn out to be a prelude to more capable software that figures out what to do with all this data, but these are early days.

This is, from what I’ve seen, the state of things right now with literature and AI. Much of it is promising, some of it is overhyped, and most of its impacts are still unknown. Still, I agree with Stephen Marche’s claim that if AI is like cinema, we’re much closer to the nickelodeon-novelty era than we are to 2001: A Space Odyssey. If the technology keeps getting better–and there’s every reason to believe that it will–AI will lead to entirely new ways of reading and writing we can barely imagine.

The Wave of the Future

To put this as simply as I can, I believe that as it improves, AI will change writing (and other arts) as much as the camera changed painting. Photography, famously, terrified and fascinated painters when it emerged in the 19th century. Here was a device that could do in seconds what painters did in hours, at a fraction of the cost. The simple version of the history has painters wailing and gnashing their teeth for a few decades while photography destroys their economic base. Then Impressionism comes along to usher in abstract art, which can’t be photographed, and give all the artists something to do again. This will probably be somewhat true for the arts as AI encroaches: any tasks that can be easily automated will quickly stop being remunerative for writers, composers, and illustrators.

This isn’t the whole story of cameras and painting, though. It’s not a coincidence that Realism, as an artistic movement, emerged in Europe and North America around the same time as the camera. Many painters actually used photography as a tool, allowing them to capture details that were too big, too small, too slow, or too fast to easily paint. The Wave (1870) would have been difficult for Gustave Courbet to paint without photographs to work from.

The first improvements to writing in the near future will let us all be little keyboard Courbets, writing with greater efficiency and less trouble. AI assistants could become spell-checkers on steroids, editing our documents to change verb tenses or perspectives, flagging inconsistencies in meaning or style, and rooting out clichés or repetitions. Imagine being able to highlight a paragraph and generate a graph, outline, or illustration from it. Next to the spell-check button, there would be a fact-check button that checks any claims in the text against a reliable source on the internet. (This would also make for a great browser extension.)

A lot of drudgery in writing will disappear. If you regularly use Gmail or Outlook, you’ve probably seen their auto-suggestion software expanding its reach in recent years, offering to finish your sentences and sign-offs automatically based on predictive algorithms. So far, these programs mostly automate stock phrases and general statements, but in the near future we might see these programs grow increasingly bold in the scope and scale of their suggestions. How many work emails could be automated? If your inbox is anything like mine, it’s probably a lot. Business correspondence as we know it might disappear into a cloud of AI assistants chatting with each other, exchanging calendar information, declining invitations, monitoring progress, and coordinating meetings as needed.

This raises certain problems and opportunities for the future of non-fiction. Any NLP sophisticated enough to do what I described in the last paragraph will probably also be good enough to read and summarize articles, reports, websites, and books for us. Taken far enough, this would lead to a very weird kind of writing meant specifically for AI. Imagine a Robot Wikipedia that contains exhaustive, highly technical explanations of every topic under the sun, ultimately produced in order to be digested and summarized by artificial intelligence.1

With fiction, the most urgent question is whether it can be fully automated. Much depends on how good future NLPs get at logic. Right now, AI has trouble understanding motivation, plot developments, and consistency from scene to scene. This is why the few attempts to let NLPs write fiction by themselves end in bizarre, meandering, plotless rants.

If the reasoning skills of models like Google’s PaLM are any indication, though, AI is well on the way to vaulting this hurdle. Then, all bets are off. As with poetry, the more formal something is, the easier it is to crack: the first genres AI figures out might be Greek tragedies or detective stories–put in a tragic reversal here, a goon with a blackjack up his sleeve there, resolve all plots on the last three pages, and call it a draft. Someday we might even be able to automate aimless, free-indirect discourse realist fiction about the problems of sensitive Brooklynites.

Whether or not this is actually possible is a very open question. Whether or not we should desire it, though, is urgent. On the one hand, there would be something miraculous about getting whatever spinoffs and sequels we want, or being able to turn any cockamamie concept into a novel and see if it works. This might come, though, at the cost of what Erik Hoel has memorably called the Semantic Apocalypse:

Let me make my concern as clear as possible. Imagine a future website where every time you click refresh a new and perfect Shakespeare sonnet is generated on the page in front of you. And you click again and again and again and again. Imagine then, your dread.

I share Hoel’s concern. For one thing, perfect NLPs would give me the nagging, unavoidable feeling that literature itself–the great human storehouse of myths and mystery, imagination and identity, excitement and emotion–is a joke, a game of imitation and repetition so simple a computer can do it. If it did happen, we’d probably have a period of wild novelty, then a rapid exodus away from traditional forms and towards the human-AI frontier. Taking three years to write a novel that machines can make in seconds would be perverse, like hand-crafting a 2009 Honda Civic from scratch.

Stephen Marche might be onto something when he says that writers of the future will be like hip-hop DJs and remix artists. It’s worth quoting in full:

Hip hop also demanded an entirely new musicality to maximize the effects of the innovation. Building beats and sampling required a comprehensive musical knowledge. The best DJs had the widest access to music of all kinds, and were each, in a sense, archivists. They engaged in “raids on the past,” using history for their own purposes.

Just as hip hop artists developed a consummate familiarity with earlier forms of popular music, the artists of artificial intelligence who use large language models will need to understand the history of the sentence and the development of literary style in all forms and across all genres. Linguistic AI will demand the skills of close reading and a historical breadth as the basic terms of creation.

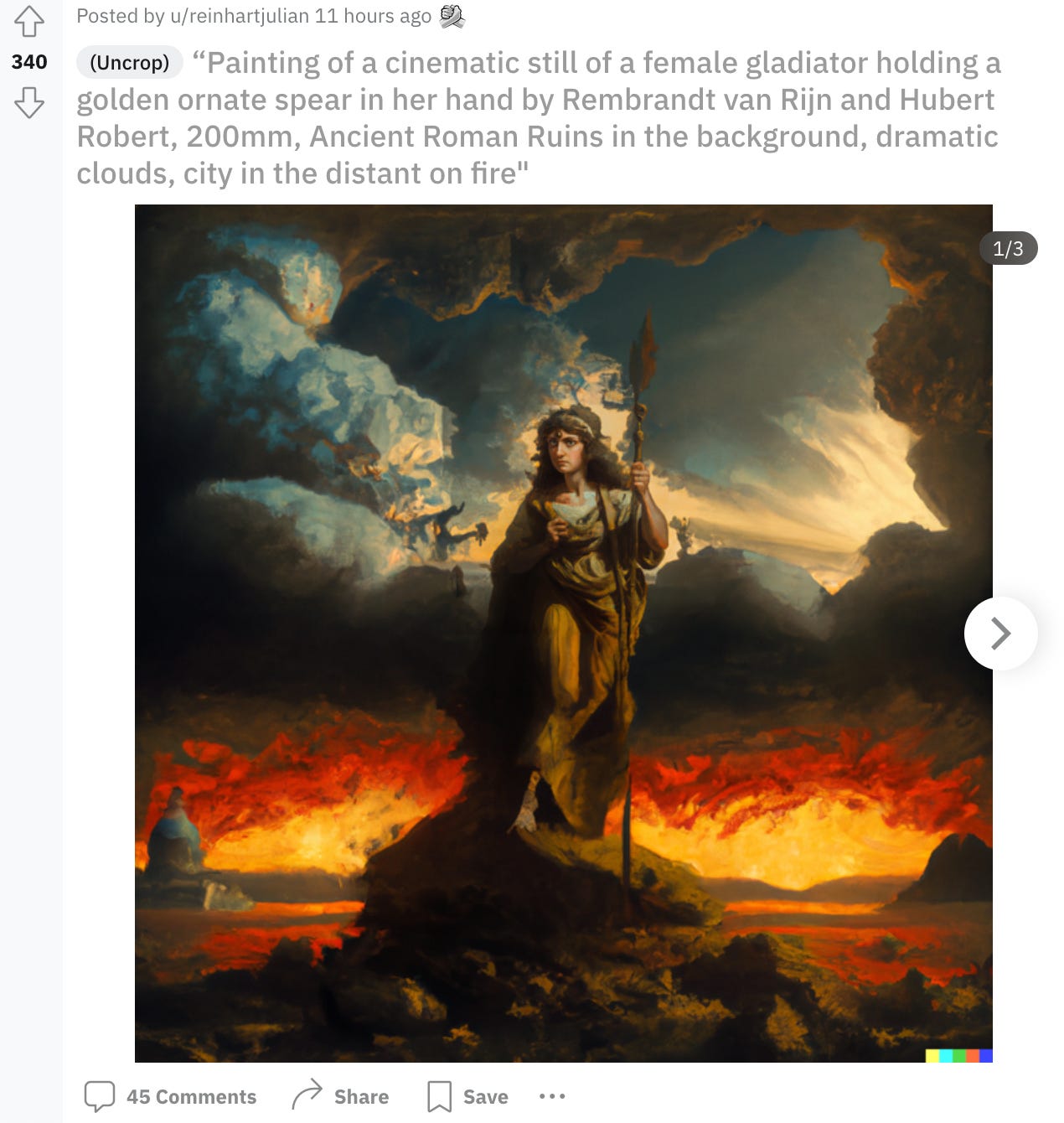

To bring things full circle, I think this tracks with what we’re already seeing in visual art with DALL-E. In the days that I’ve been writing this, I’ve made a habit of checking the DALL-E subreddit, where authorized users are playing with the program and seeing what it can do. My Twitter feed has also rapidly filled up with memes generated by DALL-E Mini, a kind of bargain DALL-E for the rest of us. As with any other creative medium that exists entirely on social media, it’s been the usual mix: mostly derivative, occasionally funny, and rarely brilliant. A huge proportion of people, faced with a program that can do potentially anything, have so far mostly used it to place Darth Vader and Homer Simpson in incongruous situations. The best DALL-E users are the ones who give it the most unusual prompts or ask for particular styles, and who know which of the variants (DALL-E always generates several) are the most appealing.

The real writers of the AI future, if we live to see it, will be masters of context, collage, and connection. They will conduct dialogues with the dead, animate the spirits of internet forums or corporations into characters, and build bizarre amalgamations of ideas into new philosophies. They will know how to craft the right prompts, ask the right questions, cultivate the results, and find communities to share them in. Who knows what kinds of insights we can gather when we have cameras taking snapshots of the language for us? And who knows what kind of Impressionism, beyond the reach of the machines, might emerge?

But this post is getting long, and we’re getting dangerously close to senselessness. One thing I can say for sure: AI writers will not be conscious. It sure would look cool if they were, though.

Meanwhile, on Musement

The Musement blog rolls on. Here’s what you may have missed:

Hugh Kenner on Bloomsday, and Ulysses at 100

The Logic Piano, and thoughts on early computers

The Hieroglyph Snail, possible mascot for this blog

Digital Natives, and some light ranting on that old chestnut.

Last week also marked the first anniversary of this newsletter. Happy birthday, Bibliophilia! This is one of the most satisfying writing projects I’ve ever undertaken, and one that I’m enormously proud of. I’m going to keep at this for as long as I can. I hope you’ll join me for another year of bookish pursuits.

That’s all. Happy reading!

(Of course, we’re already on the way to AI that can do this now using the Internet, but one AI-future topic I definitely don’t have time to discuss here is the impending tidal wave of AI junk. Suffice it to say that the more AI-generated text an NLP reads, the sloppier and less reliable its output becomes.)